Deviating from the Guidance on Validation Methods

At the outset of his presentation, Andrew explained that “there is no tried and tested gold standard validation method”. Anyone embarking on the introduction of whole-slide imaging into their laboratory was free to make choices and to deviate from the guidance. The guidance, however, provides a useful framework for the validation process.

TERMINOLOGY

• Validation: A process to demonstrate that a new method performs as expected for its intended use and environment before it is used for patient care; the overall goal is safe implementation. For example, with whole slide imaging, validation means making sure that the pathologist can make diagnoses at least as accurately as with glass slides on the light microscope.

• Verification: A process to obtain objective evidence that confirms specified performance requirements have been satisfied. Examples include achieving acceptable diagnostic concordance between whole slide images and glass slides, and confirming scanning completeness and accuracy.

Acknowledging that diagnostic pathology is an interpretive speciality, Andrew recognised that it is legitimate to ask how it can be validated. In addition, WSI is complex and has a lot of moving parts. It depends on hardware, software, and other external variables, such as histology, technologists, and pathologists.

So why would we validate whole slide imaging for clinical use?

Even though the tools have received approval from various regulators, the use of such a system for patient care merits performance verification and validation before it is introduced. Benefits include:

1. Reassurance that whole slide imaging can be used for making accurate diagnoses across the spectrum of cases encountered in a particular laboratory or practice setting.

2. Identification of what needs to be optimized in terms of histology and scanner performance.

3. Establishment of optimal laboratory and diagnostic workflows.

4. Discovery of the limitations of the technology so that cases can be re-scanned or deferred to a glass slide review.

The review of the 2013 guidance took publicly available evidence and attempted to answer three questions: what types of cases should be included in the validation process, how long the “washout” period should be, and what an ideal pass/fail mark is. The new guideline also attempts to achieve balance with respect to the time and resources required to complete a validation process.

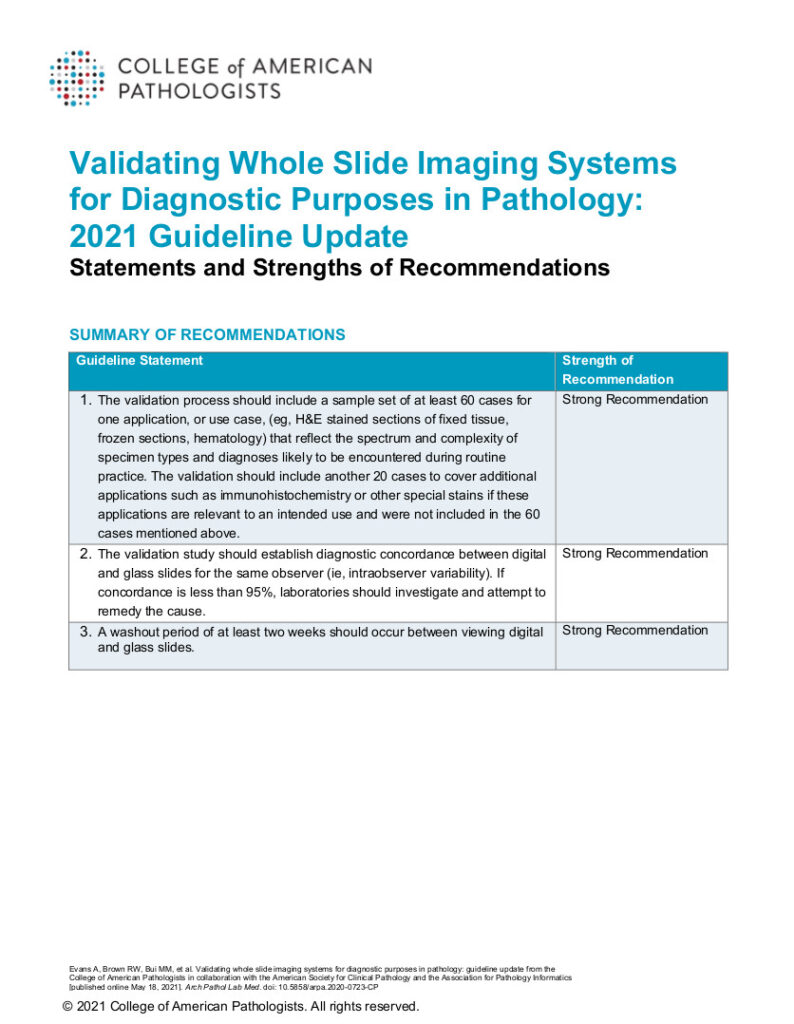

The review resulted in these three strong recommendations:

1. A sample set of at least 60 cases for one application or use case should be included in a validation study. The cases should reflect the spectrum and complexity of cases likely to be encountered in the practice setting where the validation is being completed.

2. Establish diagnostic concordance between digital and glass slides for the same observer. As a benchmark, concordance of less than 95% warrants additional investigation and an attempt to remedy the cause.

3. A washout period of at least two weeks between the viewing of digital and glass slides.
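
As an illustration only, the three recommendations above can be turned into a simple tally for one observer. The sketch below is not part of the guideline: the `CaseReview` record, the `summarise_validation` function, and the use of exact string matching to decide whether two diagnoses agree are all assumptions made for this example. In practice, concordance is judged by pathologists, not by comparing text.

```python
from dataclasses import dataclass
from datetime import date

# Thresholds taken from the three recommendations in the text.
MIN_CASES = 60                # at least 60 cases per application or use case
CONCORDANCE_BENCHMARK = 0.95  # below this, investigate and try to remedy
MIN_WASHOUT_DAYS = 14         # at least two weeks between the two reads


@dataclass
class CaseReview:
    """One case read twice by the same observer (hypothetical record layout)."""
    case_id: str
    glass_diagnosis: str
    digital_diagnosis: str
    glass_read: date
    digital_read: date


def summarise_validation(reviews):
    """Summarise intraobserver concordance for a list of CaseReview records."""
    n = len(reviews)
    # Assumption: matching diagnosis strings stand in for expert adjudication.
    concordant = sum(r.glass_diagnosis == r.digital_diagnosis for r in reviews)
    concordance = concordant / n if n else 0.0
    # Flag cases whose two reads were less than two weeks apart.
    short_washout = [
        r.case_id
        for r in reviews
        if abs((r.digital_read - r.glass_read).days) < MIN_WASHOUT_DAYS
    ]
    return {
        "n_cases": n,
        "enough_cases": n >= MIN_CASES,
        "concordance": concordance,
        "needs_investigation": concordance < CONCORDANCE_BENCHMARK,
        "short_washout_cases": short_washout,
    }
```

With 60 cases, three or fewer discordant reads keep concordance at or above the 95% benchmark (57/60 = 95%), while four or more would fall below it and prompt further investigation.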

(The guidelines of 2013 and 2021 refer to visual interpretation only and do not cover image analysis or artificial intelligence tools. These are not routinely used in clinical practices yet and new guidelines will need to be developed in the future.)

Andrew Evans is the Chief of Pathology and Medical Director of Laboratory Medicine at Mackenzie Health, and Chair of the College of American Pathologists whole slide imaging validation guideline update committee and digital and computational pathology committee.